The Need for Clarity in Artificial Intelligence

As artificial intelligence (AI) becomes more deeply integrated into daily life, people increasingly want to know how AI systems reach their decisions. Explainable AI (XAI) addresses this need with methods that make the reasoning behind complex AI models understandable to the people who build, use, and are affected by them.

Demystifying the Black Box

Many modern AI models, particularly deep neural networks and large ensembles, operate as “black boxes”: their outputs can be highly accurate, yet it is hard for users to see how the model arrived at a specific conclusion or decision. Explainable AI aims to reduce this opacity by revealing which inputs and learned patterns drive a model's behavior, making it more interpretable for both experts and non-experts.

Enhancing Trust and Accountability

In domains where AI impacts critical decision-making, such as healthcare, finance, and autonomous systems, trust and accountability are paramount. Explainable AI fosters trust by offering clear explanations for AI-generated outcomes, allowing users to understand, verify, and, if necessary, challenge the decisions made by AI systems.

Bridging the Gap Between Humans and AI

Explainable AI serves as a bridge between the capabilities of AI systems and human comprehension. When users can see why a system produced a given output, they can check its reasoning, catch its mistakes, and judge when to rely on it, so humans and algorithms work as collaborators rather than users simply accepting or rejecting results. This kind of collaboration is essential for getting the full value out of AI across applications.

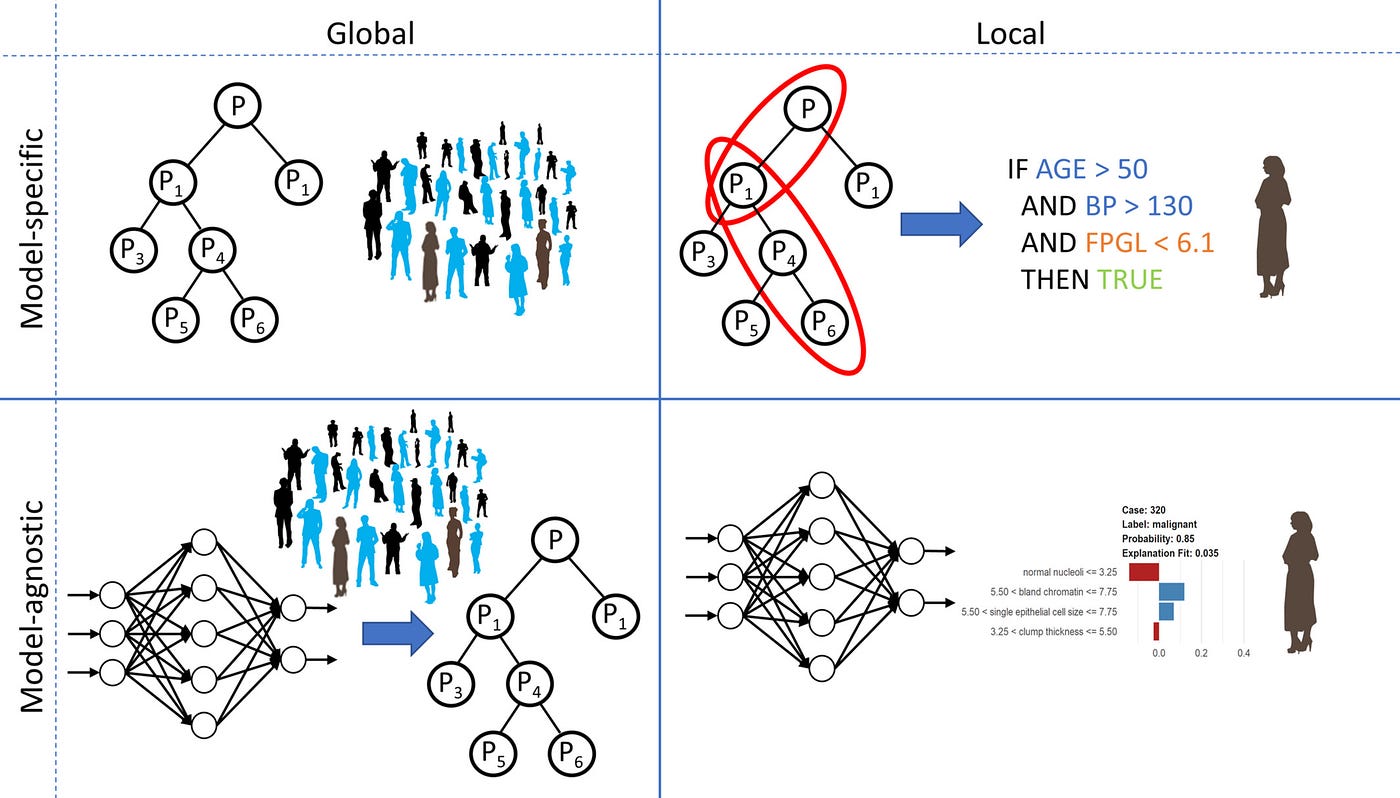

Types of Explainability Techniques

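Explainable AI uses several complementary techniques to make AI decisions more understandable. Feature importance analysis measures how much each input influences a model's predictions. Model-agnostic methods, such as LIME and Kernel SHAP, treat the model as a black box and explain it by probing only its inputs and outputs, so they work with any model type. Rule extraction produces human-readable if-then rules that approximate the model's behavior. These categories overlap (a feature importance method can itself be model-agnostic), and each addresses a different aspect of interpretability, so the right choice depends on the audience and the application.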
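As an illustration, the sketch below implements permutation feature importance, one common technique that is both a feature importance method and model-agnostic: it calls only the model's prediction function, shuffles one feature column at a time, and records how much accuracy drops. The `black_box` model, the synthetic data, and the helper names `accuracy` and `permutation_importance` are hypothetical, written for this example only; production libraries such as scikit-learn provide their own version (`sklearn.inspection.permutation_importance`).

```python
import random
from typing import Callable, List, Sequence

Row = List[float]


def accuracy(model: Callable[[Row], int], X: Sequence[Row], y: Sequence[int]) -> float:
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(
    model: Callable[[Row], int],
    X: Sequence[Row],
    y: Sequence[int],
    n_repeats: int = 5,
    seed: int = 0,
) -> List[float]:
    """Average drop in accuracy when one feature's column is shuffled.

    Shuffling breaks the link between that feature and the label; the model
    itself is only ever queried for predictions (model-agnostic).
    """
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    n_features = len(X[0])
    importances = []
    for j in range(n_features):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Copy each row, replacing feature j with its shuffled value.
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical black box for illustration: we only call it, never inspect it.
def black_box(row: Row) -> int:
    return int(row[0] + 0.5 * row[1] > 1.0)  # feature 2 is ignored entirely


if __name__ == "__main__":
    data_rng = random.Random(42)
    X = [[data_rng.random() * 2 for _ in range(3)] for _ in range(500)]
    # Labels come from the model itself here, so baseline accuracy is 1.0;
    # with real data you would use held-out ground-truth labels instead.
    y = [black_box(row) for row in X]
    for j, score in enumerate(permutation_importance(black_box, X, y)):
        print(f"feature {j}: mean accuracy drop {score:.3f}")
```

Because `black_box` ignores feature 2, shuffling that column leaves every prediction unchanged, so its importance is exactly 0.0, while feature 0, which carries the larger weight, shows a larger accuracy drop than feature 1. The explanation is built purely from input/output behavior, which is what makes the approach applicable to any model.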

Real-World Applications of Explainable AI

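Explainable AI is gaining prominence in industries where the interpretability of AI decisions is crucial. In healthcare, XAI helps clinicians understand the rationale behind AI-suggested diagnoses, for example by highlighting the regions of a medical image that most influenced a prediction, which increases their acceptance of AI-assisted decision support. Similarly, in finance, explanations of risk-assessment and investment models let analysts and customers see which factors drove a decision, such as why a loan application was declined.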

Ethical Considerations in AI Transparency

As we embrace Explainable AI, ethical considerations come to the forefront. Transparency must be balanced against the protection of sensitive information: a detailed explanation can reveal personal data used to train the model, or expose enough of a proprietary model's logic for others to reconstruct it. Designing XAI methods that inform users without compromising privacy or intellectual property remains an ongoing challenge.

The Role of Explainability in Regulatory Compliance

Explainable AI aligns with the growing emphasis on regulatory compliance in AI. Regulators increasingly expect AI systems to be transparent; for example, the EU's General Data Protection Regulation restricts solely automated decisions that significantly affect individuals, and the EU AI Act imposes transparency requirements on high-risk systems. Integrating XAI can help organizations meet such evolving requirements and support responsible, ethical AI deployment.

Advancing Explainable AI Research

The field of Explainable AI is evolving quickly, with ongoing research aimed at improving existing methods and developing new ones. Researchers are working to make complex deep learning models more interpretable, to extend explainability to newer AI architectures, and to verify that explanations faithfully reflect what a model actually computes.

Navigating the Future with Explainable AI

In conclusion, Explainable AI is a crucial component in the evolution of artificial intelligence. As technology and human understanding become ever more intertwined, XAI helps ensure that AI systems are not only powerful but also accountable, transparent, and aligned with human values.